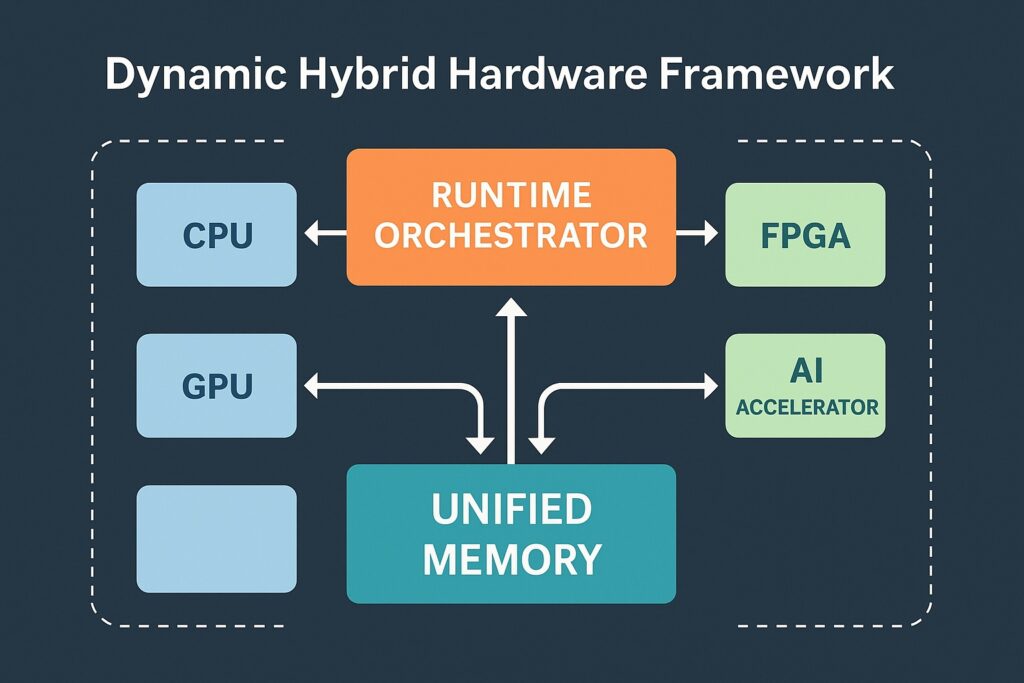

What is a Dynamic Hybrid Hardware Framework?

A Dynamic Hybrid Hardware Framework is an adaptive architecture that unifies disparate compute units behind a common runtime. Tasks can migrate in real time between general-purpose cores and accelerators based on throughput, latency, and power constraints, without forcing developers to rewrite entire applications.

Core Architecture & Design Patterns

1) Runtime Orchestrator

Monitors workloads and telemetry (latency, utilization, thermals) to schedule and migrate tasks across CPUs/GPUs/accelerators.
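
As a rough illustration of the scheduling decision described above, here is a minimal sketch in Python. The `DeviceTelemetry` fields, the `pick_device` function, and the threshold values are all assumptions made for this example, not part of any specific framework.

```python
from dataclasses import dataclass


@dataclass
class DeviceTelemetry:
    """Hypothetical per-device snapshot; field names are illustrative."""
    name: str
    est_latency_ms: float  # predicted latency for the task on this device
    utilization: float     # 0.0 (idle) to 1.0 (saturated)
    temperature_c: float   # current die temperature


def pick_device(devices, max_utilization=0.9, max_temperature_c=85.0):
    """Return the name of the fastest device that is neither saturated nor too hot.

    If every device violates a limit, fall back to the least-utilized one.
    The thresholds are assumed defaults, not recommended values.
    """
    eligible = [
        d for d in devices
        if d.utilization < max_utilization and d.temperature_c < max_temperature_c
    ]
    if eligible:
        return min(eligible, key=lambda d: d.est_latency_ms).name
    return min(devices, key=lambda d: d.utilization).name


if __name__ == "__main__":
    snapshot = [
        DeviceTelemetry("cpu", est_latency_ms=40.0, utilization=0.30, temperature_c=55.0),
        DeviceTelemetry("gpu", est_latency_ms=5.0, utilization=0.95, temperature_c=70.0),
        DeviceTelemetry("npu", est_latency_ms=8.0, utilization=0.50, temperature_c=60.0),
    ]
    print(pick_device(snapshot))
```

In this sample snapshot the GPU would be fastest but is over the utilization limit, so the sketch picks the next-fastest eligible device. A real orchestrator would also weigh migration cost and power budgets.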

2) Unified Memory & Data Movement

Zero-copy buffers, DMA pipelines, and coherent address spaces reduce copies and keep accelerators fed.
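
The zero-copy idea can be shown with a host-side analogy. The sketch below uses Python's built-in `memoryview` to hand out chunk views that share storage with one buffer. It is only an analogy: real frameworks rely on pinned memory, DMA engines, and hardware coherence, and the helper names `make_views` and `scale_in_place` are made up for this example.

```python
def make_views(buffer: bytearray, chunk_size: int):
    """Split a buffer into chunk views without copying the underlying bytes."""
    view = memoryview(buffer)
    return [view[i:i + chunk_size] for i in range(0, len(view), chunk_size)]


def scale_in_place(chunk: memoryview, factor: int) -> None:
    """Stand-in for a device kernel: multiply each byte in place (mod 256)."""
    for i in range(len(chunk)):
        chunk[i] = (chunk[i] * factor) % 256


if __name__ == "__main__":
    data = bytearray([1, 2, 3, 4, 5, 6])
    chunks = make_views(data, chunk_size=4)
    # Writing through the second view changes the original buffer,
    # because the view shares storage instead of holding a copy.
    scale_in_place(chunks[1], factor=10)
    print(list(data))
```

Because each chunk is a view, the "kernel" writes land directly in the original buffer, which is the property that lets an accelerator consume data without a staging copy.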

3) Accelerator Abstraction Layer

Common APIs hide device specifics while exposing performance knobs (batch size, concurrency, precision modes).
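
One way to picture such a layer is a small interface that every backend implements, with the tuning knobs passed in a shared config object. The sketch below is an assumption-laden illustration: `RunConfig`, `Accelerator`, `CpuBackend`, and `SimulatedBatchBackend` are hypothetical names, and both backends run on the host.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass
class RunConfig:
    """Hypothetical performance knobs shared by all backends."""
    batch_size: int = 1
    concurrency: int = 1   # not used by these toy backends
    precision: str = "fp32"  # not used by these toy backends


class Accelerator(ABC):
    """Common interface; callers never touch device-specific code."""

    @abstractmethod
    def run(self, inputs, config: RunConfig):
        """Apply the kernel (here: doubling) to every input value."""


class CpuBackend(Accelerator):
    def run(self, inputs, config):
        # Element-by-element loop, ignoring the batch size.
        return [x * 2.0 for x in inputs]


class SimulatedBatchBackend(Accelerator):
    """Stand-in for a device that consumes inputs one batch at a time."""

    def run(self, inputs, config):
        out = []
        for start in range(0, len(inputs), config.batch_size):
            batch = inputs[start:start + config.batch_size]
            out.extend(x * 2.0 for x in batch)
        return out


def double_all(backend: Accelerator, inputs, config=None):
    """Caller code is identical regardless of which backend is plugged in."""
    return backend.run(inputs, config or RunConfig())
```

The point of the design is that `double_all` does not change when a backend is swapped; only the config values and the backend object do.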

4) Reconfiguration Engine

Hot-swaps hardware kernels on FPGAs and configurable NPUs to adapt to changing workloads.
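
The bookkeeping behind hot-swapping can be sketched in software. The example below models a single reconfigurable slot that loads a kernel only when the requested one differs from what is already loaded. `ReconfigurableSlot` is a hypothetical class for illustration; on real hardware the swap would involve loading a bitstream or firmware image, which this sketch does not attempt.

```python
class ReconfigurableSlot:
    """Toy model of one reconfigurable region that holds a single kernel."""

    def __init__(self, kernels):
        self._kernels = dict(kernels)  # kernel name -> callable
        self.loaded = None             # name of the kernel currently loaded
        self.swaps = 0                 # number of reconfigurations so far

    def run(self, kernel_name, data):
        if kernel_name not in self._kernels:
            raise KeyError(f"unknown kernel: {kernel_name}")
        if self.loaded != kernel_name:
            # Reconfiguration is the expensive step, so do it only on change.
            self.loaded = kernel_name
            self.swaps += 1
        return self._kernels[kernel_name](data)


if __name__ == "__main__":
    slot = ReconfigurableSlot({"sum": sum, "max": max})
    slot.run("sum", [1, 2, 3])
    slot.run("sum", [4, 5])   # same kernel, no swap needed
    slot.run("max", [1, 5])   # different kernel, triggers a swap
    print(slot.swaps)
```

Counting swaps matters because reconfiguration time is usually far larger than a single kernel launch, so a scheduler would try to group work that uses the same kernel.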

Helpful external references: Heterogeneous System Architecture (HSA), Khronos SYCL, NVIDIA CUDA, and AMD ROCm.

Key Benefits

- Performance: Assign the most compute-intensive kernels to the most capable accelerators.

- Latency: Keep hot paths close to the data; exploit on-chip memory and parallelism.

- Energy Efficiency: Right-size execution; downshift to low-power units when feasible.

- Scalability: Scale from embedded edge devices to data center racks with the same orchestration logic.

- Resilience: Fall back to CPU implementations if accelerators are saturated or unavailable.
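
The resilience point in the list above can be sketched as a thin wrapper that tries the accelerator path first and drops to a CPU implementation on failure. `AcceleratorUnavailable` and `run_with_fallback` are hypothetical names for this example; a real runtime would raise its own device-specific errors.

```python
class AcceleratorUnavailable(RuntimeError):
    """Hypothetical error for a saturated or missing device."""


def run_with_fallback(accel_fn, cpu_fn, data):
    """Try the accelerator path; on failure, use the CPU path.

    Returns a (result, path) tuple so callers can log which path ran.
    """
    try:
        return accel_fn(data), "accelerator"
    except AcceleratorUnavailable:
        return cpu_fn(data), "cpu"


def saturated_device(data):
    """Simulates an accelerator that cannot accept more work."""
    raise AcceleratorUnavailable("device queue is full")


if __name__ == "__main__":
    result, path = run_with_fallback(saturated_device, sum, [1, 2, 3])
    print(result, path)
```

Returning the path alongside the result keeps the fallback visible to monitoring, so a silent slowdown does not go unnoticed.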

Use Cases

AI & Deep Learning

Convolutions, attention blocks, and matrix multiplies run on GPUs/NPUs, while CPUs manage control flow and pre/post-processing.

Edge Computing & IoT

Run on-device inference with failover to the cloud; balance latency, bandwidth, and battery life.

5G / Networking

Hybrid beamforming, packet processing, and encryption pipelines split across programmable logic and general cores.

Autonomous Systems

Real-time sensor fusion across CPU, GPU, and dedicated accelerators for perception, planning, and control.

Challenges & Best Practices

- Data Coherency: Use unified memory or explicit sync to avoid stale buffers.

- Granularity: Migrate at task sizes where overheads don’t erase gains.

- Tooling: Prefer portable models (e.g., SYCL) or vendor stacks (CUDA/ROCm) with mature profilers.

- Observability: Instrument latency, throughput, and thermals to guide adaptive policies.

- Fallback Paths: Maintain CPU equivalents for reliability and debuggability.
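
The granularity advice above comes down to a break-even check: offloading only helps when accelerator time plus transfer and launch overhead is less than the CPU time. The sketch below makes that arithmetic explicit. The function names and the sample numbers are assumptions for illustration, and real timings should come from profiling.

```python
def transfer_time_ms(num_bytes, bandwidth_gb_per_s):
    """Time to move num_bytes over a link, in milliseconds (1 GB = 1e9 bytes)."""
    return num_bytes / (bandwidth_gb_per_s * 1e9) * 1e3


def offload_pays_off(cpu_ms, accel_ms, transfer_ms, launch_overhead_ms=0.0):
    """True when the total accelerator cost is below the CPU cost."""
    return accel_ms + transfer_ms + launch_overhead_ms < cpu_ms


if __name__ == "__main__":
    # Small task: the transfer dominates, so staying on the CPU wins.
    print(offload_pays_off(cpu_ms=2.0, accel_ms=0.2, transfer_ms=3.0))
    # Large task: the compute saving outweighs the transfer cost.
    print(offload_pays_off(cpu_ms=200.0, accel_ms=20.0, transfer_ms=30.0))
```

With these assumed numbers, the small task stays on the CPU while the large one is worth migrating, which is why migration thresholds are usually expressed in terms of task size.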

Future Directions

Expect increased use of scheduling based on reinforcement learning (RL), domain-specific compilers, tighter CPU-GPU memory coherence, and new hybrids that pair conventional accelerators with neuromorphic or quantum co-processors, all coordinated by a robust Dynamic Hybrid Hardware Framework.

🏁 Final Thoughts

The Dynamic Hybrid Hardware Framework isn’t just another chapter in the AI story — it’s a rewrite of the whole book. By fusing logic, adaptability, and physical computing power, we’re stepping into an era where machines can think and react more like living systems.

In a few years, your smartphone, car, or even home network could be running on hardware that adapts to your usage patterns at runtime instead of waiting for the next software update.

And that’s not science fiction — that’s the next logical step in intelligent computing.